FALL DETECTION

Fall Detection Prototype

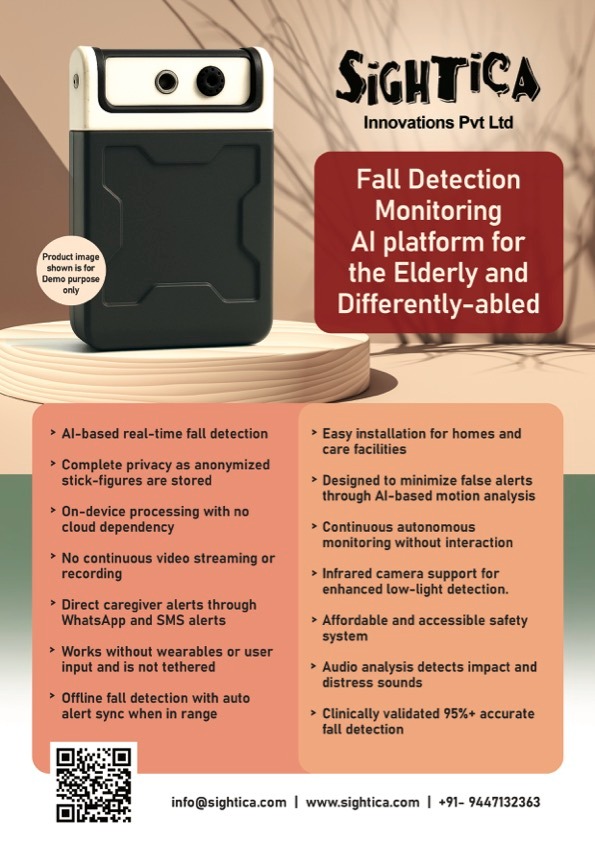

We detect and track users using a multi-modal fusion of RGB and infrared cameras with audio sensors. The system utilizes MoveNet pose estimation and computer vision algorithms to analyze posture dynamics. Designed to sync seamlessly with mobile apps, the system captures privacy-protected event snapshots and shares instant alerts with families, caregivers, and hospitals via WhatsApp and SMS.

AI and machine learning are combined with dual-camera technology (RGB + IR) to study behavioral patterns in a home or other defined space. Models such as YOLOv10 and MoveNet analyze daily movements to build datasets that help identify anomalies such as a slip, a fall, or a prolonged absence from a room. The system triggers an immediate alert once a critical event is confirmed.
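As an illustration of the "prolonged absence" anomaly, here is a minimal sketch of a timer that flags when no person has been detected for longer than a threshold. The class name `AbsenceMonitor`, the `max_absence_s` parameter, and the 30-minute default are hypothetical choices for this example, not details of the prototype. The per-frame `person_detected` flag is assumed to come from an upstream detector.

```python
class AbsenceMonitor:
    """Flags a prolonged absence when no person is seen for too long.

    Hypothetical helper for illustration; the threshold is an assumption.
    """

    def __init__(self, max_absence_s=1800.0):
        self.max_absence_s = max_absence_s
        self.last_seen = None  # timestamp of the most recent detection

    def update(self, timestamp_s, person_detected):
        """Feed one observation; return True if the absence is prolonged."""
        if person_detected or self.last_seen is None:
            # Reset the clock on a detection, or start it on the first frame.
            self.last_seen = timestamp_s
            return False
        return (timestamp_s - self.last_seen) > self.max_absence_s
```

Calling `update` once per processed frame with a monotonic timestamp is enough. A deployment would likely also need per-room state and quiet hours, which this sketch leaves out.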

Prototype

We use MoveNet, a high-speed pose estimation model that extracts 17 body keypoints for precise posture analysis and is optimized for real-time performance on edge devices. For the prototype, we use OpenCV and a dual-camera (RGB + IR) setup to keep detection accurate across lighting conditions. The solution runs locally on a Raspberry Pi 5, delivering privacy-first, low-latency analytics that detect anomalies and falls without cloud dependence.
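To make "posture analysis" concrete, the sketch below derives one simple cue from the 17 keypoints: the angle of the torso relative to vertical. It assumes MoveNet's usual output layout, with keypoints in COCO order and each given as normalized `(y, x, score)`. The function names, the 0.3 confidence cutoff, and the 60 degree threshold are illustrative assumptions, not values from the prototype.

```python
import math

# Indices in MoveNet's 17-keypoint (COCO-order) output.
LEFT_SHOULDER, RIGHT_SHOULDER, LEFT_HIP, RIGHT_HIP = 5, 6, 11, 12


def torso_angle_deg(keypoints, min_score=0.3):
    """Angle between the shoulder-to-hip axis and vertical, in degrees.

    keypoints: 17 entries of (y, x, score), normalized to [0, 1].
    Returns None when any torso keypoint is below min_score.
    """
    torso = (LEFT_SHOULDER, RIGHT_SHOULDER, LEFT_HIP, RIGHT_HIP)
    if any(keypoints[i][2] < min_score for i in torso):
        return None
    # Midpoints of the shoulders and of the hips.
    sy = (keypoints[LEFT_SHOULDER][0] + keypoints[RIGHT_SHOULDER][0]) / 2
    sx = (keypoints[LEFT_SHOULDER][1] + keypoints[RIGHT_SHOULDER][1]) / 2
    hy = (keypoints[LEFT_HIP][0] + keypoints[RIGHT_HIP][0]) / 2
    hx = (keypoints[LEFT_HIP][1] + keypoints[RIGHT_HIP][1]) / 2
    # 0 degrees = upright torso, 90 degrees = horizontal torso.
    return math.degrees(math.atan2(abs(hx - sx), abs(hy - sy)))


def is_lying(keypoints, threshold_deg=60.0):
    """True when the torso is closer to horizontal than threshold_deg."""
    angle = torso_angle_deg(keypoints)
    return angle is not None and angle > threshold_deg
```

A horizontal torso in a single frame does not by itself indicate a fall, since a person may simply be lying down. A cue like this is more useful when tracked over time, for example as a rapid change from upright to horizontal.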

Audio Identification

We improve reliability with a machine learning audio classification system designed to differentiate human falls from other sudden noises. We preprocess audio to extract spectral features, including Mel-frequency cepstral coefficients (MFCCs), zero-crossing rate, and spectral centroid. A Random Forest ensemble classifier, trained on a diverse dataset of falls and hard negative sounds such as dropped objects, uses these features to minimize false alarms and support accurate real-time detection.
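Two of the features named above are simple enough to sketch directly. The following standard-library example computes the zero-crossing rate and the spectral centroid of a single audio frame. It uses a naive DFT for clarity, where a real pipeline would use an FFT from a signal-processing library. MFCC extraction and the Random Forest classifier are omitted. The function names and frame representation (a list of float samples) are assumptions for illustration.

```python
import cmath
import math


def zero_crossing_rate(frame):
    """Fraction of adjacent sample pairs whose signs differ."""
    crossings = sum(
        1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0)
    )
    return crossings / (len(frame) - 1)


def spectral_centroid(frame, sample_rate):
    """Magnitude-weighted mean frequency of the frame, in Hz.

    Uses a naive O(n^2) DFT over the non-negative frequency bins.
    """
    n = len(frame)
    weighted_sum = 0.0
    total_magnitude = 0.0
    for k in range(n // 2 + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * t / n)
            for t, x in enumerate(frame)
        )
        magnitude = abs(coeff)
        weighted_sum += (k * sample_rate / n) * magnitude
        total_magnitude += magnitude
    # A silent frame has no defined centroid; report 0.0 by convention.
    return weighted_sum / total_magnitude if total_magnitude > 0 else 0.0
```

Impact sounds tend to be short and broadband, so features like these are typically computed per frame and then summarized (for example by mean and variance) before being passed to a classifier.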

Neural Network at Work

Our custom Temporal Shift Module (TSM)–inspired 2D CNN classifier processes the keypoint data extracted by MoveNet to recognize dynamic movement patterns. This lightweight architecture effectively models time-based actions (like a sudden fall) without the computational weight of 3D CNNs. We use the Raspberry Pi 5, featuring a quad-core Arm Cortex-A76 processor, to handle simultaneous keypoint extraction and classification logic locally at the edge.
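The core idea behind a Temporal Shift Module is that part of each frame's feature channels is exchanged with neighboring frames, so an ordinary 2D layer sees a little of the past and the future at no extra compute cost. The sketch below shows only that shift operation on a sequence of per-frame feature vectors. The text above does not specify the prototype's network, so the `temporal_shift` function, the `shift_div` parameter, and the list-of-lists layout are assumptions for illustration.

```python
def temporal_shift(frames, shift_div=4):
    """Shift a fraction of channels along the time axis, TSM-style.

    frames: list of T frames, each a list of C channel values.
    The first C // shift_div channels take their value from the next
    frame, the following C // shift_div channels from the previous
    frame, and the remaining channels are left unchanged. Positions
    with no neighbor are zero-padded.
    """
    num_frames = len(frames)
    num_channels = len(frames[0])
    fold = num_channels // shift_div
    shifted = [[0.0] * num_channels for _ in range(num_frames)]
    for t in range(num_frames):
        for ch in range(num_channels):
            if ch < fold:
                src = t + 1  # pull from the future frame
            elif ch < 2 * fold:
                src = t - 1  # pull from the past frame
            else:
                src = t      # keep the current frame's value
            if 0 <= src < num_frames:
                shifted[t][ch] = frames[src][ch]
    return shifted
```

For keypoint input, each frame could be the 17 keypoints flattened into a feature vector. After the shift, a per-frame layer effectively mixes information from adjacent frames, which is how this family of models captures motion such as a sudden fall without 3D convolutions.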